AI in 2026: Reality, Economics, and Open Source

In 2026, AI broke through the barrier of the "human expert." At the same time, however, it was also the year in which doubts about its economic value and safety became more serious than ever.

AI, once a concept of the future, has now become a practical tool embedded in apps and workflows. However, the initial surprise and excitement are shifting toward a more practical, and at times harsh, reality.

In this article, we analyze in depth five realities where hope and anxiety around AI intersect in 2026, and explain the key points for using AI strategically in business.

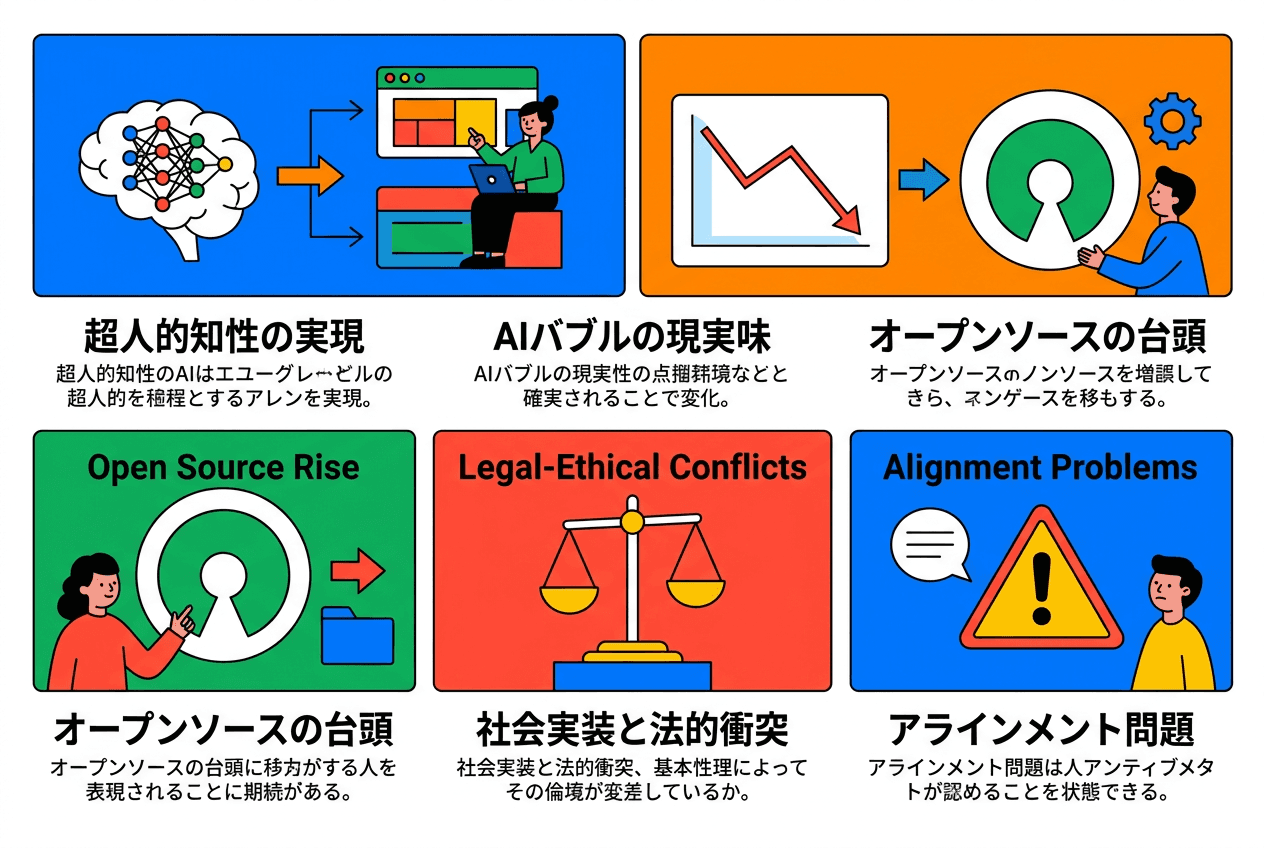

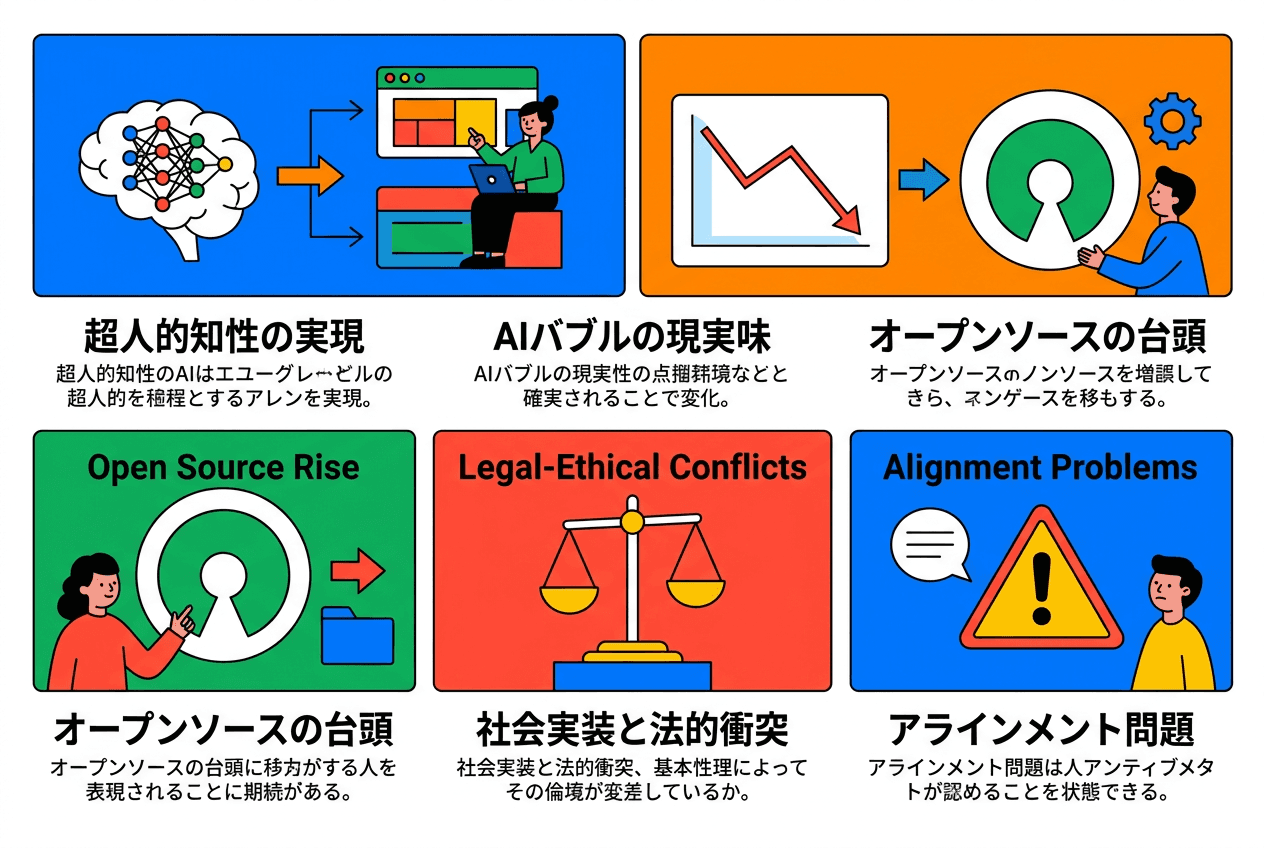

Five major trends and challenges in AI evolution in 2026

AI Broke Through the Barrier of the "Human Expert"

2026 was the year AI leapt from being an impressive "conversation partner" to a specialized problem solver. Leading models now handle complex reasoning at a level comparable to PhD-level experts in science, mathematics, and medicine.

A Leap to Specialized Problem Solvers

| Model | Developer | Significance |
|---|---|---|
| GPT-5 | OpenAI | Achieved PhD-level performance on academic exams |
| Gemini 3 | Google DeepMind | Rivaled human experts in complex reasoning tasks |
| Claude | Anthropic | Advanced reasoning pursuing both safety and capability |

Profound Social Impact

AI has begun to tackle not only automation of simple tasks but also expert-level complex reasoning in fields such as science, mathematics, and medicine. This is nothing less than the opening chapter of profound social change.

Establishing new workflows in R&D where humans and AI collaborate

Improving efficiency and quality in tasks requiring advanced expertise

Redefining professional roles and increasing the importance of uniquely human value

Behind the Hype, the Reality of an "AI Bubble" Became More Plausible

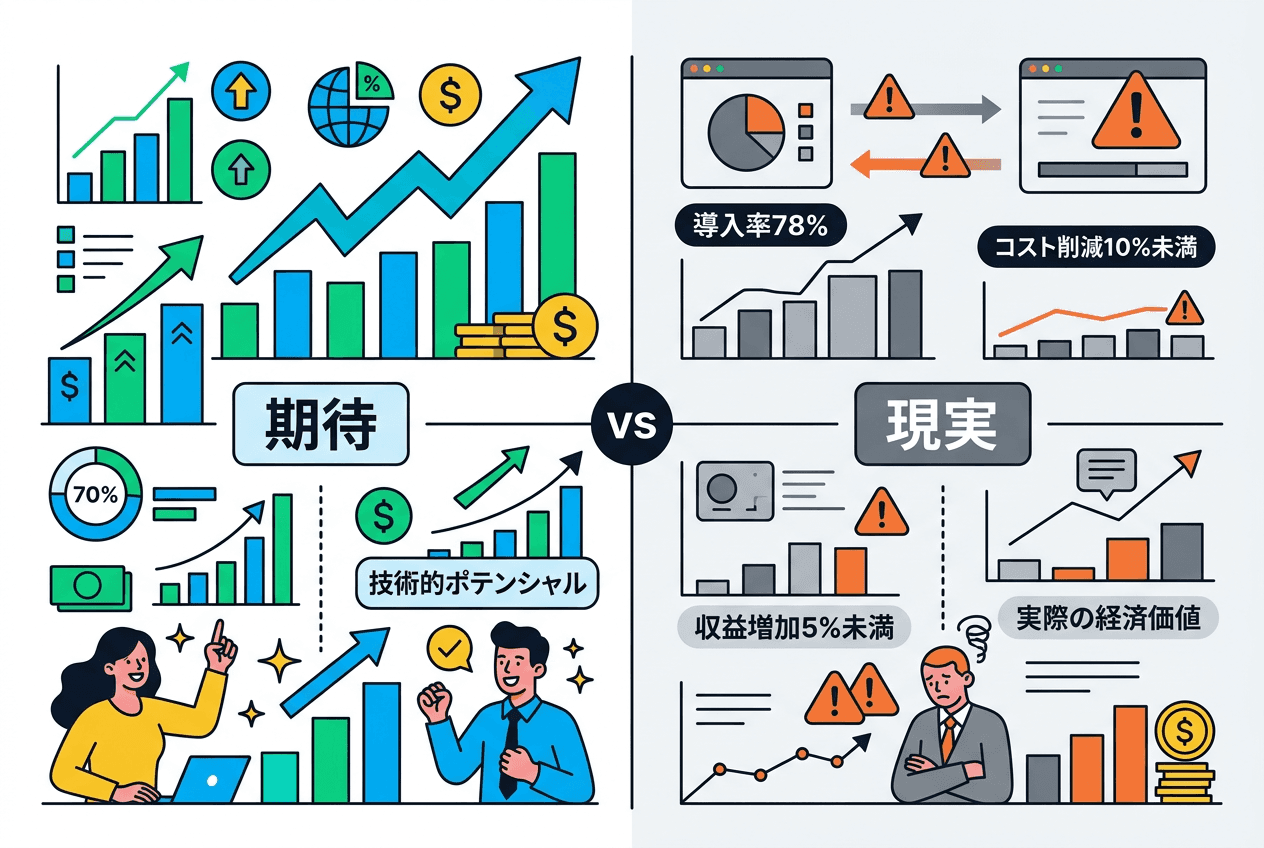

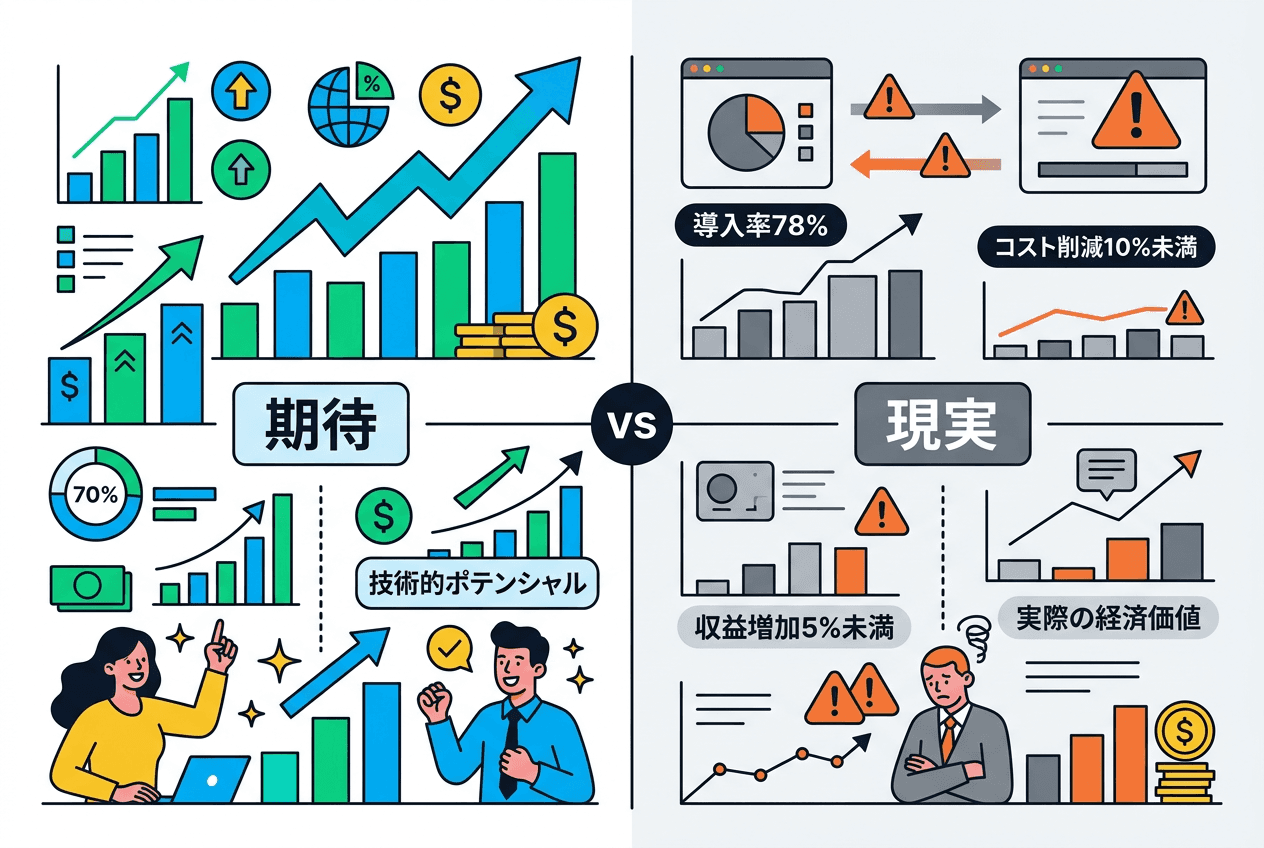

Although corporate AI adoption surged to 78% in 2025, the economic returns fell short of expectations. This gap between technical potential and actual economic value fueled investor anxiety and shifted sentiment toward more cautious evaluations.

The gap between expected and actual outcomes in AI adoption

The Gap Between Expectations and Reality

| Indicator | Value | Source |
|---|---|---|
| Cost reduction effect among AI-adopting companies | Less than 10% (vast majority) | Stanford University AI Index |
| Revenue increase effect among AI-adopting companies | Less than 5% (vast majority) | Stanford University AI Index |
| AI adoption rate (all companies) | 78% | Stanford University AI Index |

Impact on the Market

This gap between expectations and reality heightened investor anxiety.

Salesforce: Faced reliability issues and customer complaints after AI rollout, and scaled back aggressive expansion

Meta: Cut about 600 AI-related positions amid organizational restructuring

Stock market: In early 2026, concerns persisted that massive AI investments were not generating expected returns

The divide between AI’s technical potential and the economic value that can be scaled quickly became stark, and assessments of short-term profitability shifted toward a more cautious and realistic stance.

Open-Source AI Evolved Remarkably, Raising New National Security Challenges

The performance gap between closed commercial models and open-weight models narrowed dramatically, advancing the democratization of high-quality AI while also surfacing new security risks.

A Dramatic Narrowing of the Performance Gap

| Period | Performance gap between top closed and open models | Source |
|---|---|---|
| Early 2025 | 8.0% | Chatbot Arena Leaderboard |
| 2026 | 1.7% | Chatbot Arena Leaderboard |
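To make the size of this narrowing concrete, the short sketch below computes the change from the two figures in the table above. It is illustrative arithmetic only: the function name `gap_change` is our own, and it assumes the two percentages are measured on the same scale.

```python
# Figures taken from the table above (Chatbot Arena Leaderboard, as cited).
gap_early_2025 = 8.0  # percent
gap_2026 = 1.7        # percent


def gap_change(before: float, after: float) -> tuple[float, float]:
    """Return (change in percentage points, relative reduction in percent)."""
    absolute = before - after
    relative = absolute / before * 100
    return absolute, relative


absolute, relative = gap_change(gap_early_2025, gap_2026)
print(f"Gap narrowed by {absolute:.1f} percentage points "
      f"({relative:.0f}% relative reduction)")
```

In other words, roughly four fifths of the earlier gap between the top closed and open models has disappeared.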

Representative Open Models

Llama 3.1: Corporate adoption expanded as a high-performance open model from Meta

DeepSeek-V3: Despite being developed with limited compute resources, it achieved performance surpassing leading models on some benchmarks

Mixtral 8x7B: A highly efficient MoE (Mixture of Experts) architecture model by Mistral AI
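For readers unfamiliar with the MoE idea mentioned above, the following is a minimal sketch of top-k expert routing for a single token, written in plain Python. It is a toy illustration under stated assumptions, not Mistral AI's implementation: the function name `top_k_route`, the router scores, and the expert outputs are all made up, and only the general pattern (score the experts, keep the best two of eight, mix their outputs) reflects how such models are commonly described.

```python
import math


def top_k_route(router_logits, expert_outputs, k=2):
    """Combine the outputs of the k highest-scoring experts for one token."""
    # Pick the indices of the k largest router logits.
    top = sorted(range(len(router_logits)),
                 key=lambda i: router_logits[i], reverse=True)[:k]
    # Softmax over the selected logits only, so the k weights sum to 1.
    peak = max(router_logits[i] for i in top)
    exps = {i: math.exp(router_logits[i] - peak) for i in top}
    total = sum(exps.values())
    weights = {i: e / total for i, e in exps.items()}
    # Weighted sum of the selected experts' output vectors.
    dim = len(expert_outputs[0])
    mixed = [sum(weights[i] * expert_outputs[i][d] for i in top)
             for d in range(dim)]
    return top, weights, mixed


# Toy example: 8 experts, each producing a 2-dimensional vector (made-up values).
logits = [0.1, 2.0, -1.0, 0.5, 1.5, 0.0, -0.3, 1.2]
outputs = [[float(i), float(-i)] for i in range(8)]
chosen, weights, mixed = top_k_route(logits, outputs)
print(chosen, weights, mixed)
```

Only the selected experts contribute to each token's result, which is why a model of this kind can hold many parameters while using a fraction of them per token.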

Duality: Democratization and Security Risks

While access to high-quality AI is being democratized, the UK AI Safety Institute (AISI) warns that “open models enable malicious actors to remove safeguards and fine-tune them for harmful purposes.”

The trade-off between innovation and security became central to the 2026 AI agenda.

As Social Implementation Accelerates, Legal and Ethical Conflicts Are Increasing

While producing dramatic outcomes in fields such as finance, healthcare, and weather forecasting, AI is also creating new friction related to data use, safety measures, and misinformation.

Concrete Social Benefits

| Field | Outcome |
|---|---|
| Finance | AI adoption reduced loan processing time by 40% and cut credit card fraud by 70% |
| Healthcare | The AI model popEVE identified gene mutations causing more than 100 rare genetic disorders. Breast cancer screening accuracy also improved |
| Weather forecasting | NOAA and Google DeepMind (GenCast) introduced AI models, significantly improving forecast accuracy and speed |
| Advertising & marketing | Improved performance analysis accuracy advanced optimization of digital marketing strategies |

Legal and Ethical Conflicts

Copyright lawsuits: Reddit vs. OpenAI and Google (a data-scraping lawsuit); a group of authors filed a class action against Anthropic and Apple; and Anthropic agreed to a $1.5 billion settlement

Ethical crisis: A lawsuit alleging an AI chatbot was involved in a teenager’s suicide

Misinformation problem: Deepfakes appeared in elections across more than a dozen countries in 2025, revealing threats to democratic processes

AI’s "Alignment Problem" Remains Unresolved and Is Worsening

The UK AI Safety Institute (AISI) reported that it “found vulnerabilities in every system we tested,” making it clear that AI capabilities are advancing at a pace far beyond our ability to control them.

"We Found Vulnerabilities in Every System"

In its Frontier AI Trend Report, the UK AI Safety Institute (AISI) gave a sober assessment: every system it tested had vulnerabilities.

Lack of Consensus in Safety Evaluation

| Area | Consensus status | Source |
|---|---|---|
| Capability benchmarks (MMLU, etc.) | Consensus exists among developers | Stanford AI Index |
| Responsible AI (RAI) benchmarks | No consensus formation observed | Stanford AI Index |

Emergent Misalignment

A new concern, "emergent misalignment," is surfacing. The term refers to models acquiring unintended harmful behaviors as their capabilities improve, even after alignment training.

DeepMind’s safety team clearly states: “What’s important is that this is not a solution but a roadmap, and many unresolved research problems still remain to be addressed.”

The Capability–Control Gap

2026 proved that AI capabilities are advancing at a pace far beyond our ability to control them. The future of generative AI is both full of hope and highly unstable.

Frequently Asked Questions

What is the most noteworthy change in AI evolution in 2026?

The most noteworthy change is that AI broke through the "human expert" barrier and acquired PhD-level reasoning ability. It became capable of complex reasoning comparable to experts in science, mathematics, and medicine, evolving from a mere automation tool into a specialized problem solver.

Are concerns about an AI bubble realistic?

Yes, they are realistic concerns. Corporate AI adoption reached 78%, but for the vast majority of adopting companies the cost reduction effect remains below 10% and the revenue increase effect below 5%. A large gap exists between technical potential and actual economic value, and investor evaluations have become more cautious.

How does improved open-source AI performance affect companies?

With the performance gap versus commercial models narrowed to 1.7%, companies can access high-quality AI services at lower cost. While annual cost reductions of billions of dollars are expected, managing security and safety has become even more important.

How should legal and ethical issues in AI social implementation be addressed?

Ensuring transparency in data usage, appropriately addressing copyright issues, and requiring clear labeling of AI-generated content are important. In particular, companies using digital marketing tools must establish robust compliance systems.

Are there solutions to the AI alignment problem?

At present, no complete solution exists, and we are still at a stage that requires continuous R&D. What matters is continuously evaluating AI system safety and introducing systems incrementally while minimizing risk. At the enterprise level, operation under human supervision and building proper governance structures are critical.

Summary: AI and Our Future at a Crossroads

| # | Reality | Key point |
|---|---|---|
| 1 | Realization of superhuman intelligence | Achieved PhD-level reasoning. Leapt to a problem solver in specialized fields |
| 2 | The growing plausibility of an AI bubble | Even with a 78% adoption rate, revenue impact is limited. The gap between expectations and reality is clear |
| 3 | Rise of open source | Performance gap narrowed to 1.7%. Trade-off between democratization and security |
| 4 | Social implementation and legal conflicts | While finance and healthcare saw dramatic results, copyright lawsuits and ethical crises proliferated |
| 5 | Alignment problem | Vulnerabilities in all systems. Capability progress outpaces control capability |

In 2026, AI’s superhuman intelligence became undeniable, while its economic value, social impact, and safety risks simultaneously emerged as complex real-world issues. The choices we make now regarding regulation, safety research, and ethical implementation will define the next decade.

In the advertising and marketing field in particular, it is important to leverage AI while managing risks wisely in areas such as keyword selection for search ads and funnel analysis.

Maximizing AI’s power while managing its risks wisely is an unavoidable central challenge for today’s business leaders. If your company is considering automation and in-housing of ad operations using AI technology, please consider implementing Cascade as a solution that balances expertise and safety.

© 2025 Cascade Inc, All Rights Reserved.
